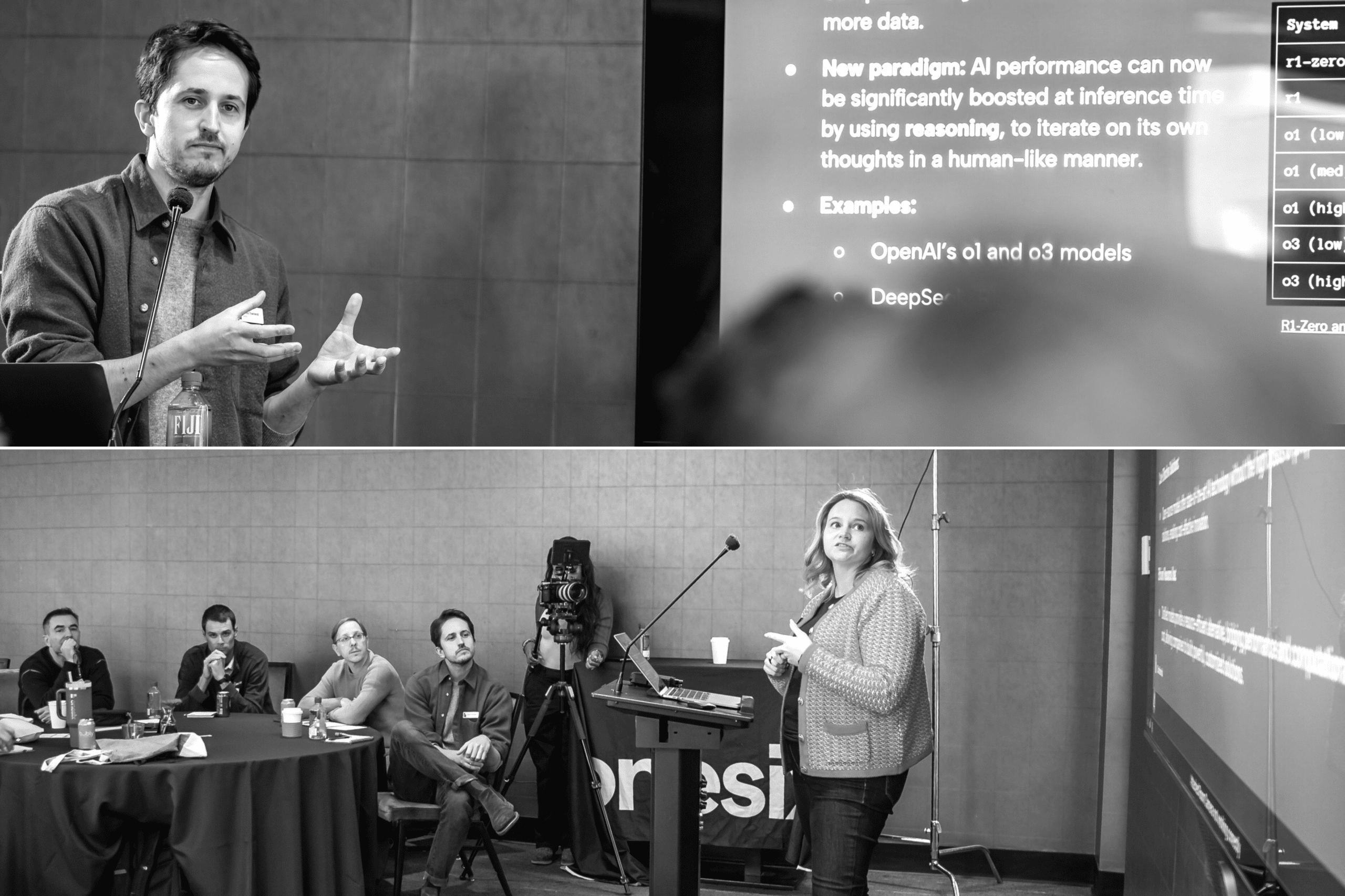

Agentic AI Is a Systems Design Problem

This is Part 3 of a three-part series:

- Part 3: Agentic AI Is a Systems Design Problem

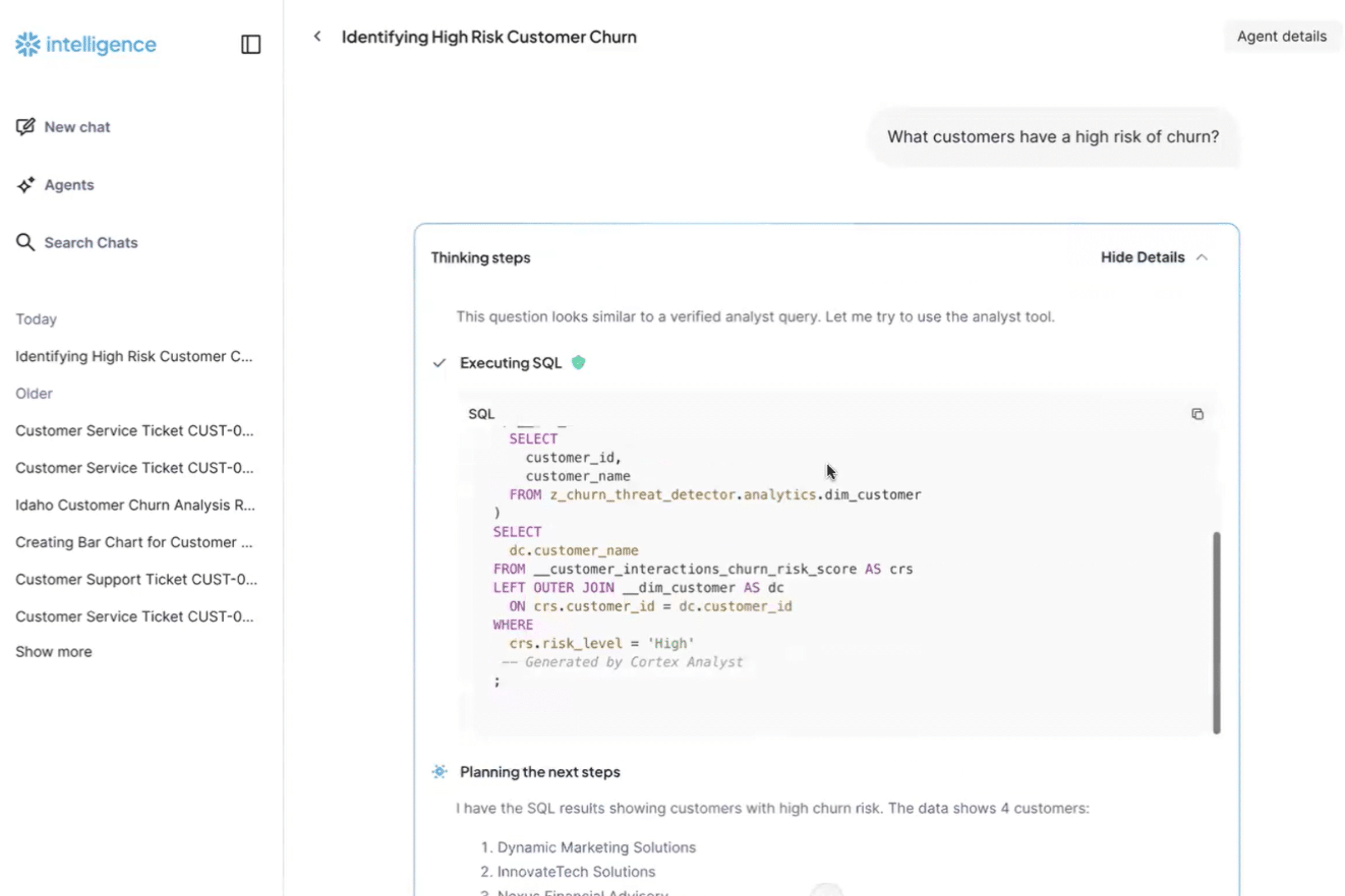

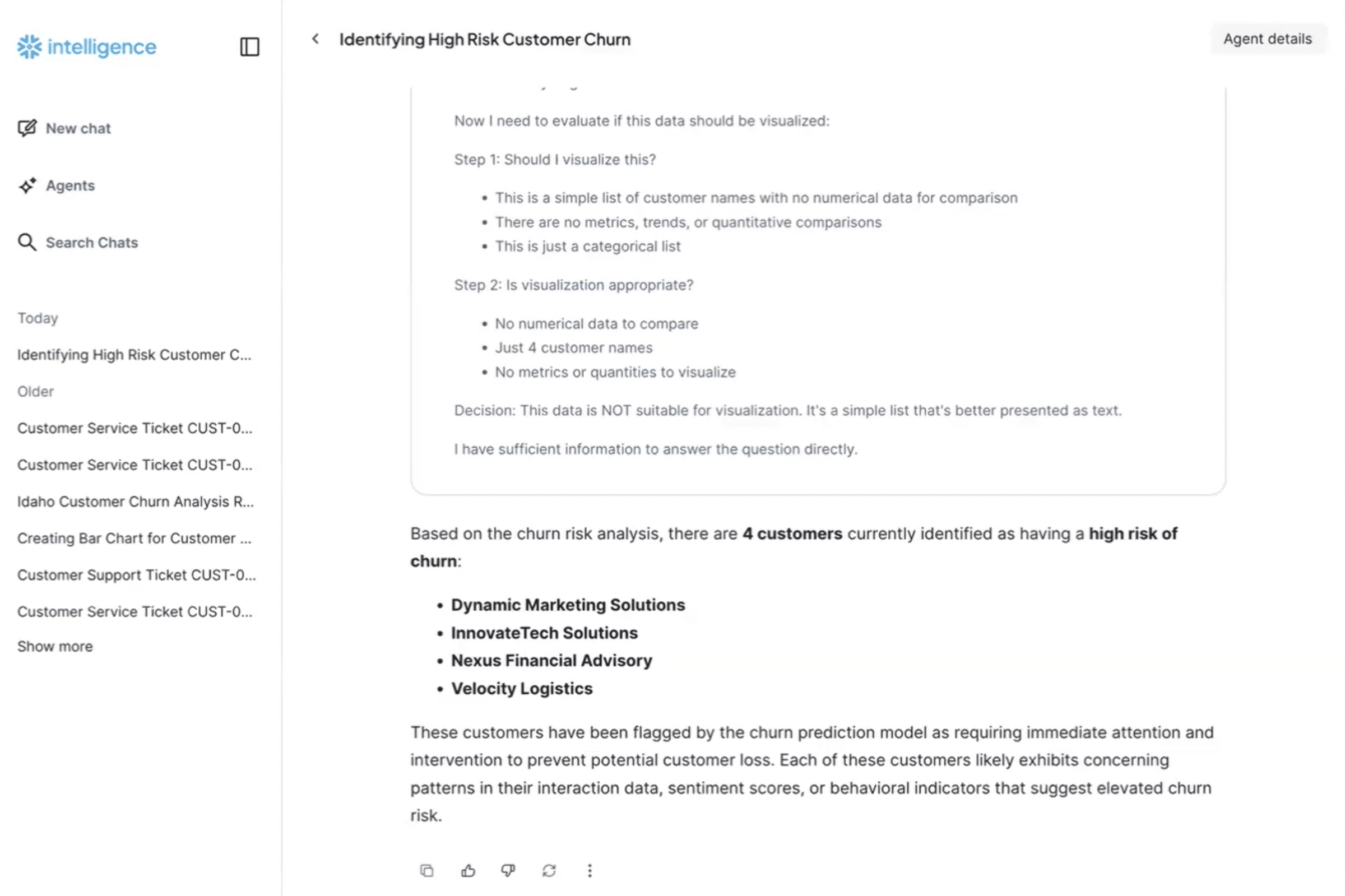

Most agent demos look the same: one capable LLM, a handful of tools, and a happy-path workflow that works–once. Maybe even a few times. Then it gets shown to stakeholders and the question becomes: can we ship this?

This is where most teams get stuck.

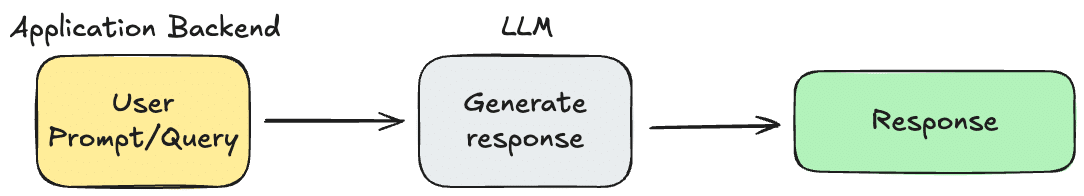

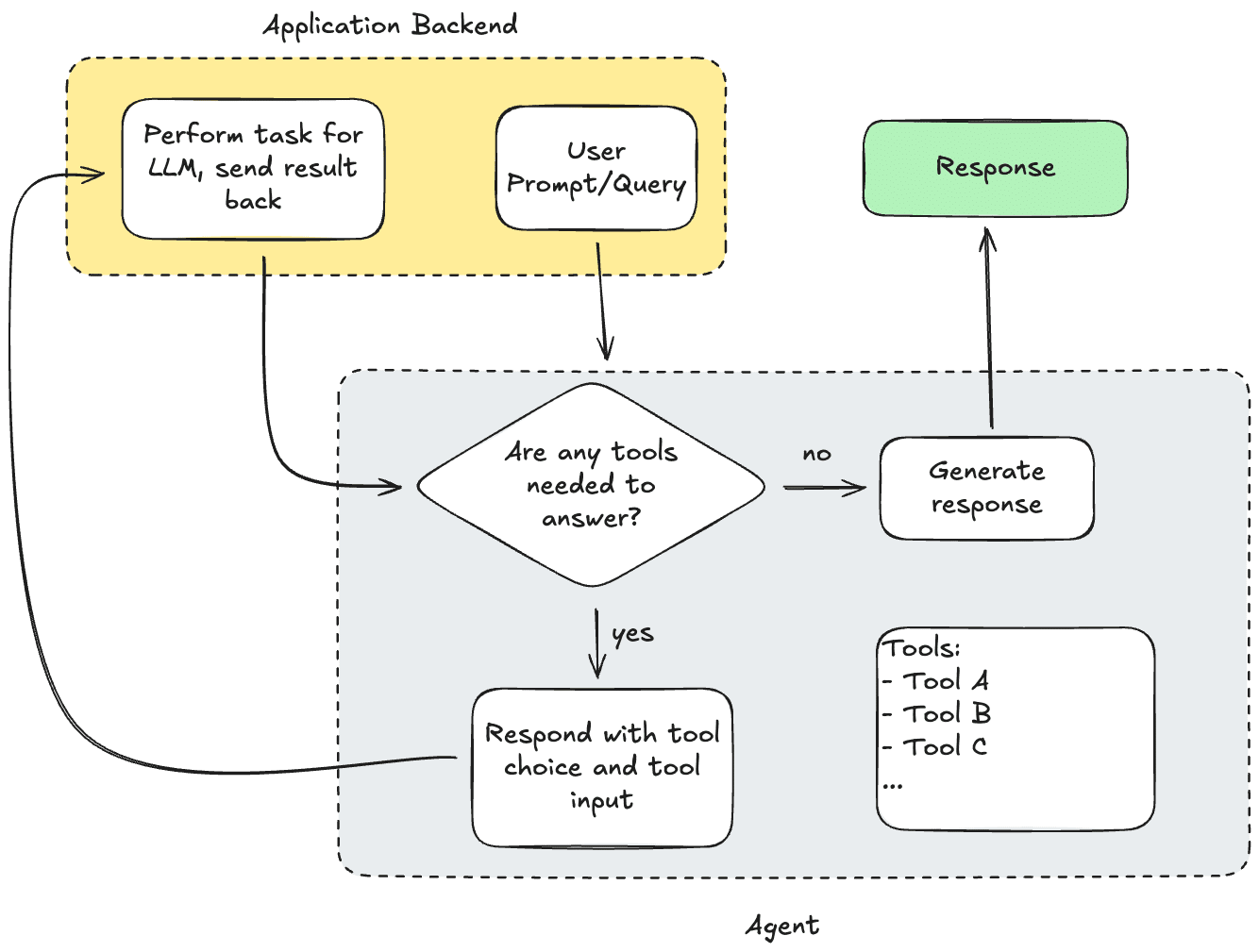

The gap between a compelling agent demo and a production-ready agentic system isn’t about prompt quality or model choice. It’s about systems design. In production, an agent is not a clever script–its a distributed, stateful system that must be repeatable, observable, secure, and cost-bounded while operating under uncertainty.

The prototype mindset optimizes for capability: can the agent do the thing?

The production mindset optimizes for reliability: can the system do the thing a thousand times a day, across teams, without surprises?

Nearly every production decision for agentic AI falls into three buckets:

- How the agent operates (design patterns)

- What you build vs what you buy (architecture and ownership)

- How you observe, debug, and control cost (non-negotiables)

Design Patterns: How Your Agentic System Actually Operates

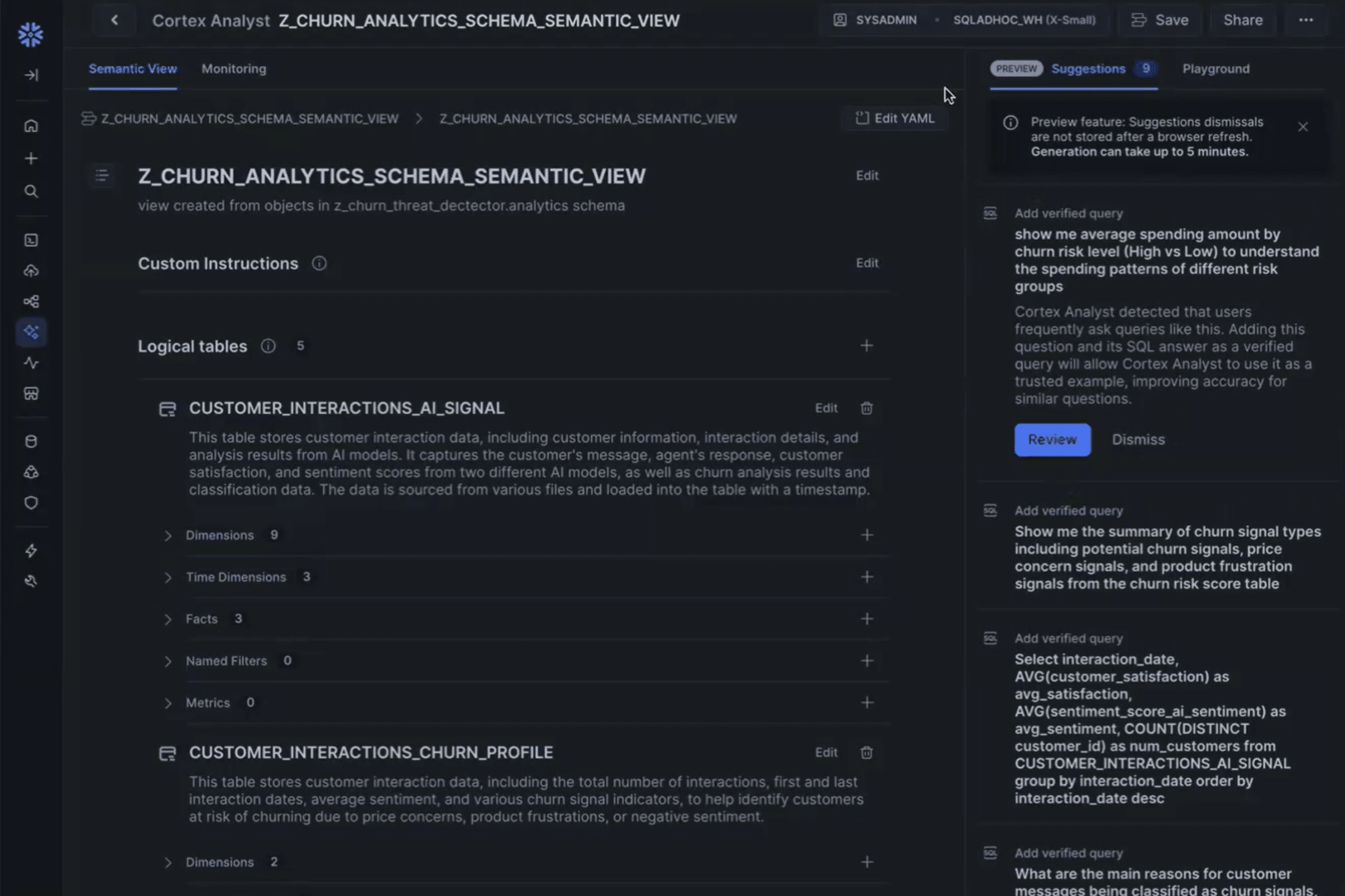

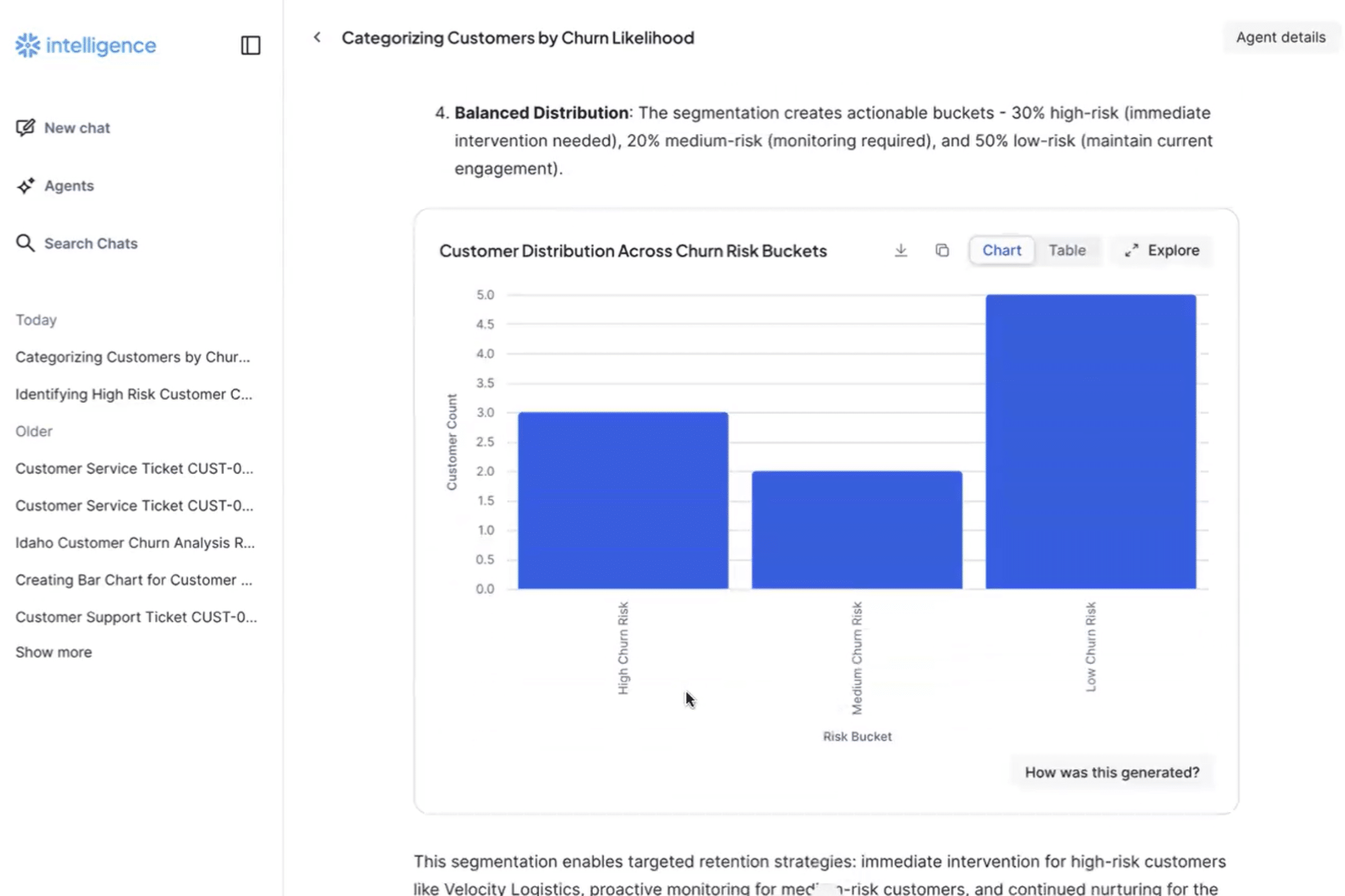

Choosing an agent design pattern is a structural decision guided by the business objective and determines your system’s failure modes, debuggability, and operational burden. Throughout 2025, the community shifted away from “monolithic” prompts toward specialized multi-agent coordination. By breaking complex tasks into discrete roles, your system can achieve higher reliability and easier maintenance.

Below, we explore four common patterns of agentic architecture, moving from rigid structured workflows to highly decentralized systems, before examining the specific role–and risks—of the single-agent model.

The Orchestrator Pattern

The supervisor agent plans tasks, delegates to tools or sub-agents, and aggregates results.

Best for: workflows that must be predictable, auditable, and easy to reason about

Tradeoffs:

- Easier to trace and debug

- Centralized decision-making simplifies governance

- Planning agent becomes a critical dependency

This pattern maps cleanly to traditional workflow engines and is often the easiest to productionalize.

Agentic Investment Research Engine

Real-World Examples

Customer Support System

A triage agent receives a support ticket, classifies the issue type (network, billing, access), and routes it to the appropriate specialist agent. The triage agent doesn’t handle the ticket itself–it decides which expert to consult. This mirrors human support centers where a receptionist routes calls to technical support, billing teams, and account management.

Multi-Agent Investment Analysis

A coordinator agent receives a ticker symbol and dispatches it to four parallel specialist agents simultaneously–fundamental analysis (financial statements), technical analysis (price patterns), sentiment analysis (news/social signals), and ESG evaluation. The coordinator aggregates their independent insights into a single investment recommendation.

The Specialized Pipeline Pattern

A fixed sequence of specialized agents (e.g., retrieve → analyze → decide → act).

Best for: well-defined business processes like document extraction, intake triage, or approval flows.

Tradeoffs:

- Clear contracts between stages

- Easier testing and partial re-runs

- Less flexible when workflows need dynamic branching

This pattern shines when the business process already exists and the agent augments it, rather than replaces it.

Real-World Examples

Contract Draft Pipeline

A law firm’s document system chains four agents in sequence:

- Template selection: receives contract type, jurisdiction, and party information; selects the appropriate base template from the firm’s library

- Clause customization: takes the template and modifies standard clauses based on negotiated business terms (payment schedules, liability caps, etc)

- Regulatory Compliance: reviews the customized contract against applicable laws and industry regulations

- Risk Assessment: performs comprehensive liability analysis and provides risk rating with protective language recommendations

Contract Draft Pipeline

Asynchronous Event-Driven Agents

Agents subscribe to events and react asynchronously (ticket created, invoice posted, alert fired).

Best for: high-volume operational environments where agents augment existing systems

Tradeoffs:

- Scales naturally with event streams

- Requires strong idempotency and state management

- Debugging spans time, services, and retries

This pattern treats agents as probabilistic microservices–powerful, but operationally demanding.

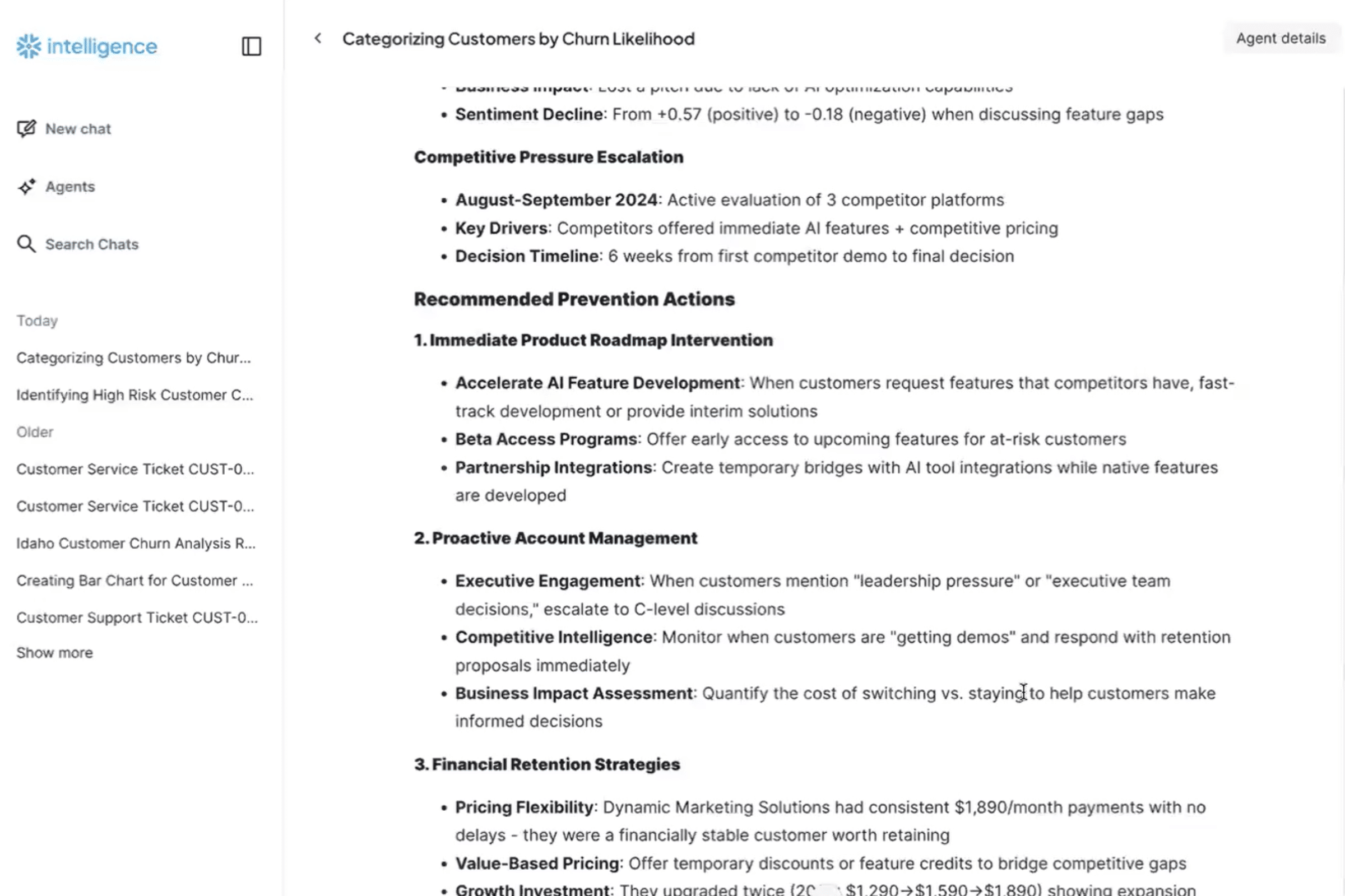

Agentic Lead Intelligence

Real-World Examples

Self-Healing IT Infrastructure

When system logs and metrics arrive via Kafka topics, multi AI agents respond in parallel without explicit orchestration:

- Anomaly detection agent watches for error spikes and raises severity flags

- Auto-mitigation agent attempts standard remediation (restart service, clear cache, rollback deployment)

- Escalation agent pages on-call engineers if severity exceeds threshold

- Communication agent updates incident dashboard and posts to slack

Each agent subscribes independently to the same event stream. No centralized coordinator decides which agent should act–they all react based on their internal logic.

Lead Intelligence for Sales Teams

When new lead emails arrive in the inbox, multiple agents react asynchronously to enrich the opportunity:

- Lead qualification agent computes fit score and budget indicators from the prospect’s email domain and message content

- Sale intelligence agent researches the prospect’s company, recent news, and competitive context

- Quote recommendation agent generates personalized pricing and product bundle suggestions based on company profile and message content

- Outreach preparation agent drafts position-specific talking points and identifies relevant case studies

All execute concurrently without blocking the email system. By the time a sales rep opens the lead, they have a complete intelligence package—prospect research, competitive context, recommended quote positioning, and talking points—dramatically shortening sales cycles.

The Single “Generalist” Agent

One agent with many tools and an ever-growing prompt.

Best for: proofs-of-concept, demos, and narrow use-cases

Reality: while often the starting point for developers, this pattern faces a fundamental scaling wall as workloads grow in complexity.

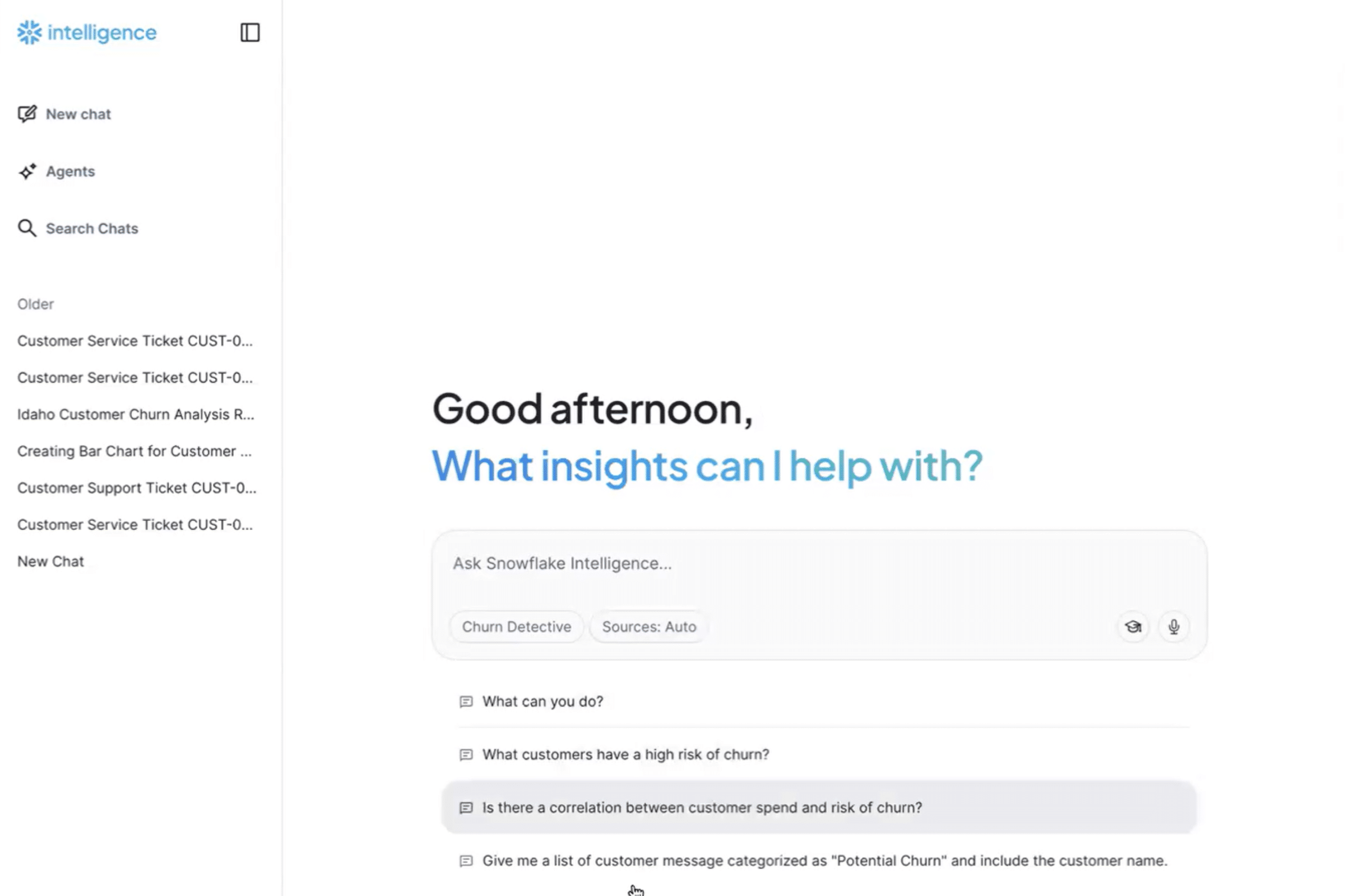

Platform Copilot

Real-World Examples

Coding Assistants and Platform Copilots

The single agent pattern works well in constrained domains like basic coding assistants or platform copilots—they operate synchronously, reasoning through tasks, and have a defined set of tools and actions. However, once behavior becomes complex, debugging turns into archaeology. Single agents struggle with:

- Context window saturation: accumulating conversation history and tool results quickly exhausts token budgets

- Tool selection chaos: With 20+ tools available, the agent wastes inference cycles selecting and describing unsuitable options

- Error propagation: A single poor decision cascades through the entire workflow with no validation layer

It is important to note that the Single Agent is not “bad” architecture–in fact, when applied to the right problem, it is incredibly powerful. Agentic coding tools like Claude Code are immensely successful at executing complex, iterative technical tasks. Just don’t expect it to help with qualifying inbound leads.

Choosing a pattern is choosing how your system fails–and how easily humans can understand why.

The decision tree is straightforward:

- Fixed, sequential process with known dependencies? → Pipeline pattern

- Need dynamic routing based on request type? → Orchestrator pattern

- Narrow, bounded tasks with a clear toolset? → Single agent

- High-volume, real-time, decoupled responses? → Event-driven pattern

The best systems often blend patterns: a mostly deterministic pipeline with one orchestrator step for routing, or an event-driven system with internal pipeline stages within each agent. The key is matching pattern choice to workload characteristics–not architecture preferences.

Build vs. Buy Is an Architectural Decision

Like any emerging technology, early adopters built everything from scratch because they had to. In early 2026, the build-vs-buy debate shifted to a question about architectural ownership. When you choose a path, you aren’t just choosing a vendor; you are choosing where complexity–and long-term maintenance–should live.

When Buying Makes Sense

Buying a platform is often the right call when you need:

- A production-grade orchestrator quickly

- Common patterns like copilots, internal search, or ticket triage

- High-volume, real-time, decoupled responses? → Event-driven pattern

Benefits: Faster time-to-value, fewer sharp edges, and fewer homegrown control planes

When Building is Justified

Building in-house makes sense when:

- Agent behavior is strategic IP

- Workflows are deeply tied to proprietary systems or data

- You already operate distributed, stateful systems with mature MLOps

Building means owning the failure modes–intentionally.

The Hybrid Reality

Most successful teams land here: buy the orchestration, monitoring, and compliance layer; build domain-specific agents, tools, and logic on top.

Build-vs-buy is about choosing where you want to innovate–and where you want leverage.

Observability, Traceability, and Cost: The Non-Negotiables

If you add observability after your agent is “working,” you aren’t building a production system–you’re running an extended prototype. In a world of non-deterministic outputs, logs are no longer enough; you need traces.

Opening the Black Box

Production agents operate in a “block box” of reasoning. To debug them, you need per-request traces that act as a forensic transcript. You must be able to reconstruct:

- The Path: which agent took the lead, and which tools were invoked?

- The Context: what specific snippet of data triggered a “hallucination” or wrong turn?

- The Logic: what was the internal “thought process” (CoT) before the action?

Without this you cannot answer “why did it do that?” or debug failures.

Metrics That Actually Matter

Once the system is live, move past trivial metrics like “total tokens” and focus on operational health:

- Task Success Rate: not “did it finish?”, but “did it achieve the user’s goal?”

- Tool Reliability: which API or tools is the “weak link” causing agent retries?

- Step Latency: where is the agent “looping” or thinking too long?

- Behavioral Drift: Is the model’s reasoning changing as the underlying LLM is updated by the provider?

Cost as a Design Constraint

In agentic systems, cost is not an afterthought—it is a limiting reagent. Multi-agent loops can exponentially increase token consumption if left unchecked.

To stay profitable, production systems must implement:

- Context Pruning: aggressively summarizing or filtering message history

- Hard Stop Rails: capping the maximum number of “turns” an agent can take before it must ask a human for help.

- Model Tiering: routing simple classification tasks to “small” models and reserving “frontier” models for complex reasoning.

The Bottom Line: if you cannot explain exactly why an agent took an action or why a specific request cost $2.00, you aren’t ready for production.

A Simple Production Readiness Checklist

Before calling an agentic system “production”:

- Pattern: Do we know which agent design pattern we’re using–and why?

- Platform: Have we consciously decided what we buy vs. build?

- Visibility: Can we trace any request and explain any decisions?

- Cost: Can we predict, measure, and control spend per outcome?

If any of these are unclear, the system may work–but it won’t scale safely.

Written by

Francisco González, Director of AI/ML

Published

March 18, 2026