Data and AI Horizon 2026: The Enterprise Readiness Imperative

Why 2026 Is a Turning Point for Enterprise AI

By early 2025, AI had crossed a critical threshold. What was once experimental became practical, accessible, and embedded into real business workflows. As organizations move into 2026, the challenge has shifted again.

AI success is no longer limited by technology. It is limited by enterprise readiness.

Insights from Snowflake’s AI + Data Predictions 2026 make this clear: while AI capabilities continue to advance rapidly, most organizations struggle to translate those advances into consistent, enterprise-wide impact without strong data foundations, governance, and operating models.

Several forces accelerated this shift:

- Maturity of AI models: Foundation models evolved from experimental tools into enterprise-ready systems with multimodal and reasoning capabilities, enabling more reliable real-world deployment.

- Few-shot and zero-shot learning: Organizations can now achieve meaningful AI outcomes without massive proprietary datasets, significantly lowering the barrier to adoption.

- AI embedded into business processes: AI moved beyond analytics and copilots into end-to-end workflows, reshaping how entire functions operate.

- The rise of agentic AI: AI is evolving from tools that assist humans to systems capable of goal-driven action: coordinating tasks, reasoning through decisions, and operating with reduced supervision.

- Maturation of LLM infrastructure: While protocols like the Model Context Protocol (MCP) emerged in late 2024, 2025 was the year they gained real enterprise traction, alongside agent orchestration frameworks, LLM gateways, and observability tools. Together, these advances helped standardize how AI systems connect to data, tools, and workflows.

These advances unlocked new opportunities, but they also exposed foundational gaps in data quality, governance, and enterprise operating models.

The Evolution of AI: 2016 → 2025

To understand why enterprise readiness matters so much in 2026, it helps to look at how quickly AI has evolved over the past decade.

Early advances between 2016 and 2020 laid the technical foundation. Breakthroughs like Transformers, BERT, and early GPT models showed that large-scale machine learning could generalize across tasks. From 2021 to 2022, progress accelerated as models such as Codex, DALL·E, and GPT-3.5 demonstrated clear business value through code, image, and text generation.

The real inflection point came in 2023 and 2024. Generative AI moved into the mainstream, driven by models like GPT-4, Claude, Claude 3, and Claude 3.5 Sonnet. AI shifted from experimentation to everyday use, becoming embedded in workflows—particularly across software development.

That shift accelerated further in 2025. Tools like Claude Code saw rapid adoption and feature expansion, signaling a move from AI-assisted coding to AI-native development. Capabilities pioneered by OpenAI Codex were increasingly absorbed into general-purpose models, speeding adoption across engineering teams.

This period also marked a move beyond text. Multimodal and generative video models—including Sora, Sora 2, and Google’s Veo 3—showed that AI could reason and generate across visual, audio, and temporal inputs, expanding enterprise use cases well beyond language and analytics.

AI is now embedded in core business processes. Automation and augmentation are operational, supported not only by more capable models (Claude Opus 4.5, GPT-4.5 through GPT-5.2, Gemini 3, DeepSeek-R1, DeepSeek V3.2), but also by maturing infrastructure designed for scale.

Tooling for agentic systems also advanced rapidly. Frameworks such as OpenAI’s Agents SDK, emerging Claude agent tooling, and protocols like MCP helped standardize how AI systems plan, execute, and interact with enterprise data and tools. Combined with orchestration layers, LLM gateways, and observability tools, these advances made AI systems easier to deploy, govern, and monitor in production.

The takeaway is clear: model innovation is no longer the constraint. The challenge for 2026 is execution—building the platforms, data foundations, and governance required to scale AI across the enterprise.

Trends Defining Enterprise AI in 2026

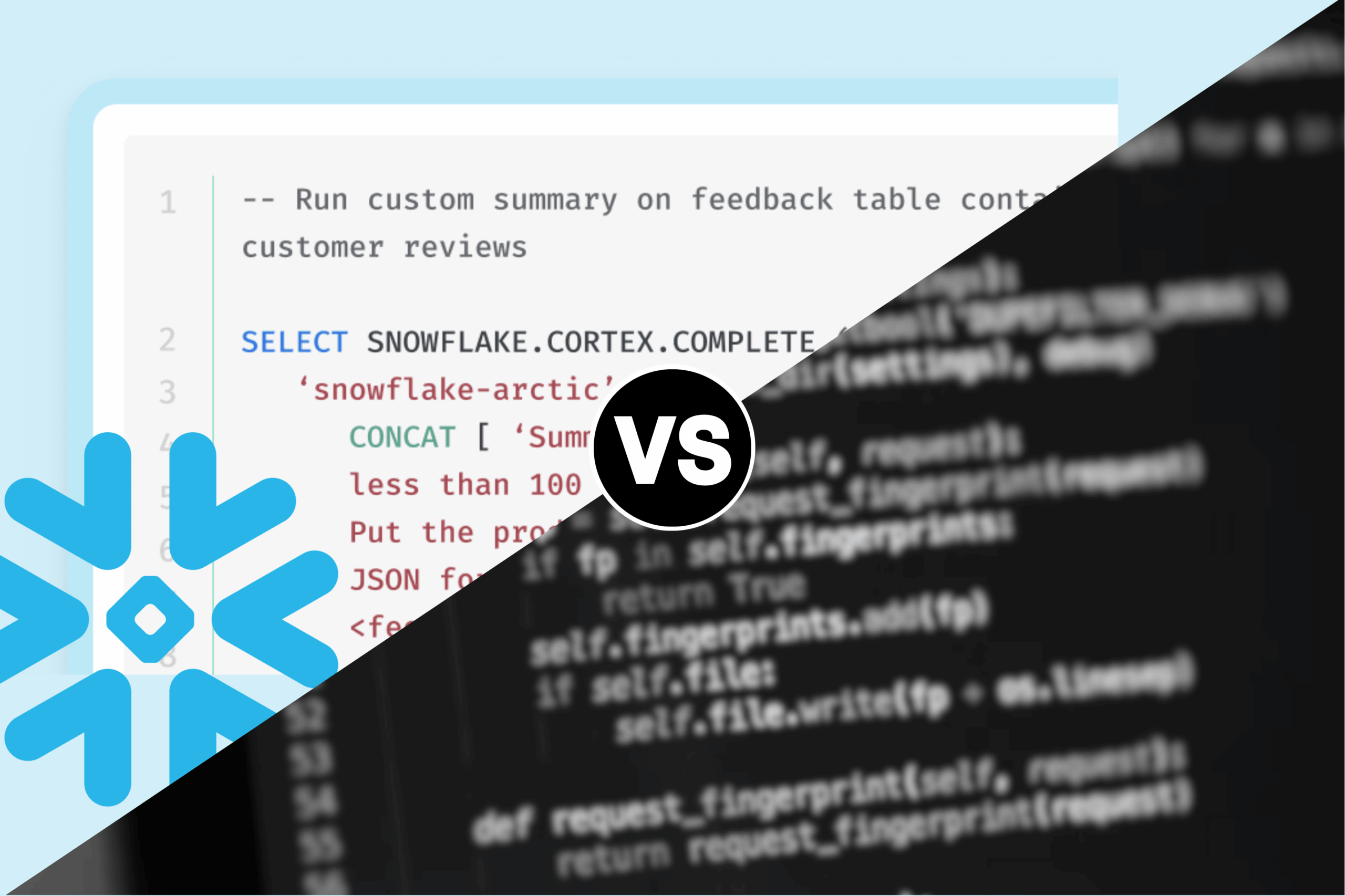

Enterprise-Ready AI Platforms

Foundation models are now the backbone of modern AI strategies. Snowflake’s report emphasizes that large language and reasoning models have matured to the point where they can support production workloads across industries, provided that enterprises are prepared to operationalize them.

Models such as GPT, Gemini, Claude, and Llama now offer:

- Multimodal intelligence across text, images, and audio

- Reasoning capabilities suitable for complex decision support

- Zero- and few-shot learning, reducing training requirements

- Broad accessibility via APIs and open-source ecosystems

In 2025, these capabilities made AI experimentation easy. In 2026, they enable platform-based AI adoption.

This shift was supported by the maturation of LLM infrastructure:

- Standardized protocols like MCP made it easier for models to interact with enterprise data and tools

- Agent orchestration frameworks enabled coordination across multiple AI components

- LLM gateways and observability tools improved security, reliability, and cost control

Together, these advances allowed enterprises to move beyond isolated implementations toward repeatable, governed AI platforms. Leading organizations are now:

- Standardizing on shared AI platforms

- Reusing models across teams and functions

- Embedding AI directly into data, analytics, and application layers

Snowflake highlights this shift as a move from isolated AI wins to connected AI ecosystems, where value compounds across the enterprise. This transition—from projects to platforms—is what enables scale, consistency, and governance.
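To make the gateway idea concrete, here is a minimal sketch of what a shared LLM gateway might do: apply a per-team budget before a request reaches a model and log latency and estimated cost for each call. Every name in it (`LLMGateway`, `CallRecord`, `echo_backend`), the character-based pricing, and the budget figures are illustrative assumptions, not any vendor's API or pricing.

```python
from dataclasses import dataclass, field
from time import perf_counter
from typing import Callable, Dict, List


@dataclass
class CallRecord:
    team: str
    model: str
    latency_s: float
    cost_usd: float


@dataclass
class LLMGateway:
    # Hypothetical price per 1,000 prompt characters, for illustration only.
    prices_per_1k_chars: Dict[str, float]
    # Hypothetical per-team spending caps in USD.
    budgets_usd: Dict[str, float]
    log: List[CallRecord] = field(default_factory=list)

    def spent(self, team: str) -> float:
        """Total estimated spend recorded for a team so far."""
        return sum(r.cost_usd for r in self.log if r.team == team)

    def call(self, team: str, model: str, prompt: str,
             backend: Callable[[str], str]) -> str:
        # Estimate cost first so over-budget requests never reach the model.
        est_cost = len(prompt) / 1000 * self.prices_per_1k_chars[model]
        if self.spent(team) + est_cost > self.budgets_usd.get(team, 0.0):
            raise PermissionError(f"{team} would exceed its budget")
        start = perf_counter()
        reply = backend(prompt)
        # Record one observability entry per successful call.
        self.log.append(CallRecord(team, model, perf_counter() - start, est_cost))
        return reply


def echo_backend(prompt: str) -> str:
    # Stand-in for a real model client.
    return f"echo: {prompt}"


gateway = LLMGateway(prices_per_1k_chars={"demo-model": 0.5},
                     budgets_usd={"finance": 1.0})
print(gateway.call("finance", "demo-model", "x" * 1000, echo_backend)[:10])
```

Routing every team through one entry point like this is what lets cost, reliability, and access policies be managed once rather than per project.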

Industry Callouts

Financial Services

Snowflake predicts a return to a data-first mindset, with AI platforms supporting fraud detection, compliance, and customer intelligence, while maintaining auditability and regulatory controls.

Healthcare & Life Sciences

Enterprise-ready AI platforms enable clinical documentation, medical research, and analytics, while respecting strict governance and privacy requirements.

Manufacturing

AI platforms power quality inspection, predictive maintenance, and operational analytics across plants and supply chains, building on years of foundational data work.

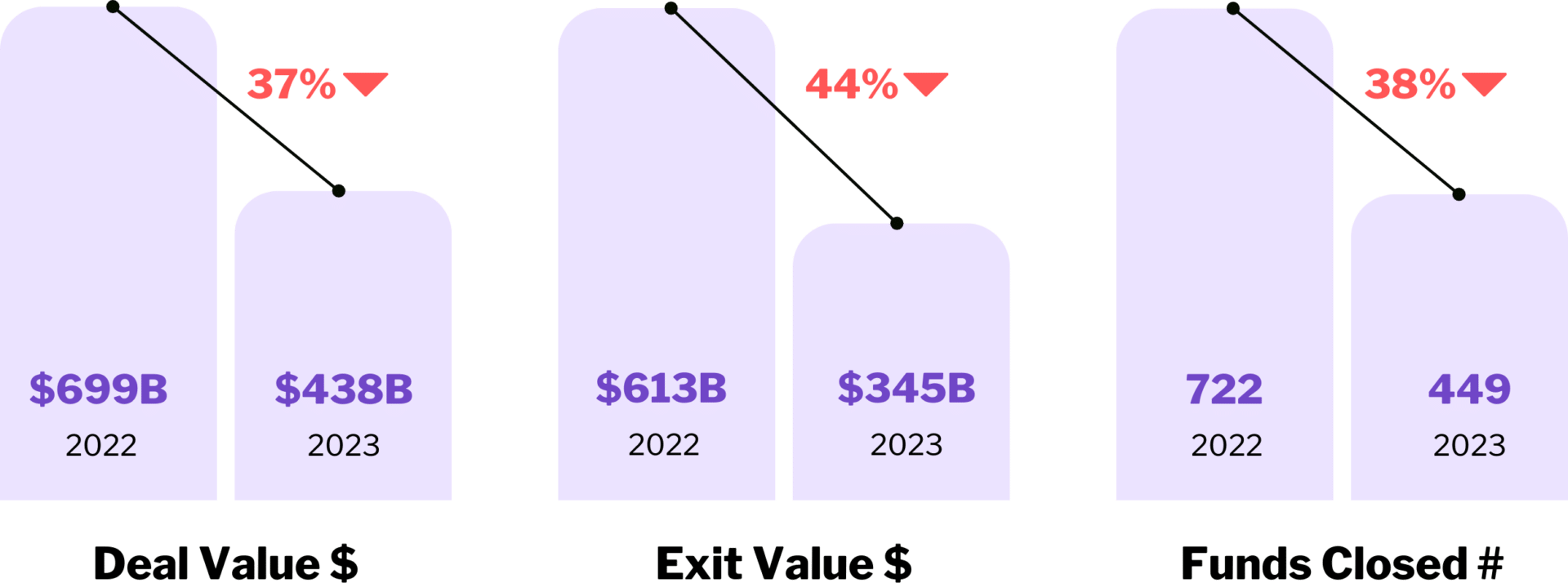

Private Equity

Shared AI platforms accelerate diligence, portfolio monitoring, and value-creation initiatives across multiple investments.

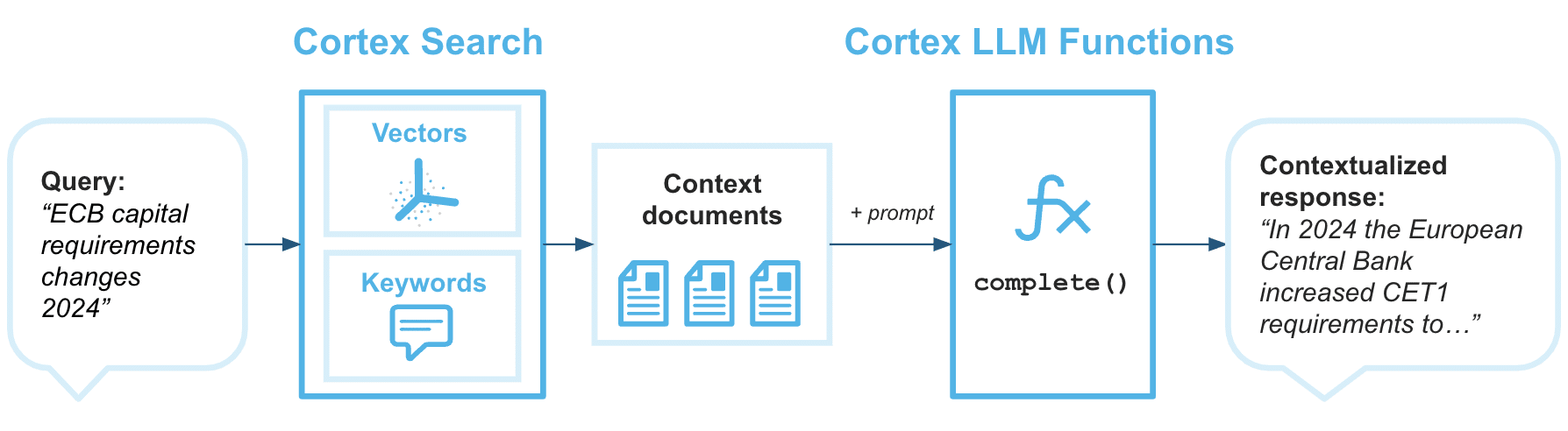

Data Readiness Limits AI Scale

As foundation models reduce the technical barrier to entry, data readiness becomes the primary constraint on enterprise AI success.

Snowflake’s research repeatedly reinforces this point: organizations with governed, high-quality, and well-understood data will scale AI faster—and more safely—than those still addressing basic data challenges.
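As a rough illustration of what "governed, high-quality" data could mean in practice, the sketch below scores a dataset on completeness, key uniqueness, and freshness before it is approved for AI use. The function names, the three metrics, and the 0.95 threshold are assumptions chosen for the example, not criteria taken from Snowflake's report.

```python
from datetime import date
from typing import Dict, List, Optional

Row = Dict[str, Optional[str]]


def readiness_report(rows: List[Row], key: str, date_field: str,
                     today: date, max_age_days: int) -> Dict[str, float]:
    """Return simple quality ratios (0.0 to 1.0) for a list of records."""
    total = len(rows)
    if total == 0:
        return {"completeness": 0.0, "uniqueness": 0.0, "freshness": 0.0}
    fields = list(rows[0].keys())
    # Completeness: share of cells that are neither missing nor empty.
    filled = sum(1 for r in rows for f in fields if r.get(f) not in (None, ""))
    # Uniqueness: distinct non-empty key values relative to row count.
    unique_keys = len({r.get(key) for r in rows if r.get(key)})
    # Freshness: share of rows updated within the allowed age (ISO dates assumed).
    fresh = 0
    for r in rows:
        raw = r.get(date_field)
        if raw and (today - date.fromisoformat(raw)).days <= max_age_days:
            fresh += 1
    return {
        "completeness": filled / (total * len(fields)),
        "uniqueness": unique_keys / total,
        "freshness": fresh / total,
    }


def is_ready(report: Dict[str, float], threshold: float = 0.95) -> bool:
    # The 0.95 threshold is an illustrative assumption, not a recommendation.
    return all(score >= threshold for score in report.values())
```

A check like this could run as a gate in a data pipeline, so that datasets falling below the agreed thresholds are flagged before any model or agent consumes them.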

General-purpose AI is powerful, but enterprise value depends on domain-specific accuracy.

Fine-tuning and domain adaptation allow organizations to customize AI for their business without building models from scratch:

- Fine-tuning no longer requires massive datasets

- Models can be adapted with dozens of high-quality examples

- AI can be tailored to workflows such as M&A data extraction, regulatory analysis, or content moderation

Snowflake also notes that agentic AI is especially sensitive to gaps in enterprise data and undocumented decision logic, making data readiness a prerequisite for autonomy.
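To show how small the data-preparation step can be, the sketch below turns a handful of curated input/output pairs into chat-style JSONL records, a layout that several fine-tuning services accept. The exact schema, the helper names, and the sample M&A text are assumptions for illustration; check the format your provider actually requires.

```python
import json
from typing import Dict, List, Tuple


def to_chat_records(examples: List[Tuple[str, str]],
                    system_prompt: str) -> List[Dict]:
    """Convert (source_text, expected_output) pairs into chat-style records."""
    records = []
    for source_text, expected in examples:
        if not source_text.strip() or not expected.strip():
            continue  # Skip incomplete pairs rather than train on them.
        records.append({"messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": source_text},
            {"role": "assistant", "content": expected},
        ]})
    return records


def to_jsonl(records: List[Dict]) -> str:
    """Serialize records as one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


# Hypothetical example pair for an M&A data-extraction workflow.
pairs = [("Acquirer A agrees to buy Target B for $10M.",
          '{"acquirer": "Acquirer A", "target": "Target B"}')]
print(to_jsonl(to_chat_records(pairs, "Extract the deal parties as JSON.")))
```

The quality of the examples matters more than the tooling: each pair should reflect the exact input the workflow sees and the exact output a domain expert would accept.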

Industry Callouts

Financial Services

Fine-tuned models improve accuracy in credit risk, AML, and regulatory reporting, areas where errors carry real consequences.

Healthcare & Life Sciences

Domain-specific AI enhances clinical documentation, trial analysis, and diagnostics, where precision and traceability are essential.

Manufacturing

Fine-tuning supports defect detection, root-cause analysis, and plant-specific optimization.

Private Equity

Domain-adapted AI accelerates deal review, contract analysis, and operational benchmarking across portfolios.

Multimodal and Agentic AI Advance

Multimodal and agentic AI represent the most visible evolution of AI capability. Snowflake describes agentic AI as the shift from systems that generate responses to systems that reason, plan, and act, behaving more like coworkers than tools.

- Multimodal AI enables systems to see, hear, read, and reason across inputs.

- Agentic AI executes multi-step workflows, adapts to feedback, and optimizes outcomes over time.

Early enterprise use cases already include:

- AI agents managing customer support end to end

- Systems optimizing inventory and logistics in real time

- Agents coordinating scheduling, reporting, and transactions

Despite rapid progress, Snowflake predicts that 2026 will be defined by measured adoption, not full autonomy. Why?

- Agentic AI amplifies data quality issues

- Errors scale faster when systems take action

- Evaluation, governance, and security become essential

The most successful organizations will deploy agents in bounded, high-confidence scenarios, with humans firmly in the loop.
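One way to picture "bounded, high-confidence scenarios, with humans firmly in the loop" is a routing policy that sits between an agent and the systems it can change. The sketch below is an assumption-laden illustration: the action names, the confidence score, and the 0.9 threshold are hypothetical, and a real deployment would also need audit logging and evaluation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class Decision(Enum):
    EXECUTE = "execute"
    NEEDS_REVIEW = "needs_review"
    REJECT = "reject"


@dataclass(frozen=True)
class ProposedAction:
    name: str
    confidence: float  # Assumed to be a score in [0, 1] from the agent or an evaluator.


@dataclass(frozen=True)
class Policy:
    auto_allowed: FrozenSet[str]   # Low-risk actions the agent may run on its own.
    review_only: FrozenSet[str]    # Actions that always require human approval.
    min_confidence: float


def route(action: ProposedAction, policy: Policy) -> Decision:
    """Decide whether an agent action runs, waits for a human, or is blocked."""
    if action.name in policy.auto_allowed:
        if action.confidence >= policy.min_confidence:
            return Decision.EXECUTE
        return Decision.NEEDS_REVIEW  # Allowed action, but confidence is too low.
    if action.name in policy.review_only:
        return Decision.NEEDS_REVIEW
    return Decision.REJECT  # Anything outside the defined bounds is blocked.


policy = Policy(auto_allowed=frozenset({"triage_ticket"}),
                review_only=frozenset({"issue_refund"}),
                min_confidence=0.9)
print(route(ProposedAction("issue_refund", 0.99), policy).value)
```

Defaulting to rejection for unlisted actions keeps the agent's scope explicit: autonomy expands only when someone deliberately adds an action to the policy.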

Industry Callouts

Financial Services

Agentic AI supports customer inquiry triage, fraud alert investigation, and reporting workflows, with human oversight required for regulated decisions.

Healthcare & Life Sciences

Multimodal AI improves clinical documentation and administrative workflows, while agentic use remains limited to non-clinical, low-risk processes.

Manufacturing

Agentic systems optimize scheduling, inventory, and quality monitoring by combining sensor data, images, and operational metrics.

Private Equity

Multimodal and agentic AI accelerate diligence, reporting, and portfolio monitoring, while investment decisions remain human-led.

Physical AI Begins to Emerge

While most enterprise AI progress has focused on software, data, and digital workflows, physical AI and robotics are beginning to show meaningful signs of progress.

The most visible advances are in fully autonomous vehicles. Robotaxi deployments, led by companies like Waymo, represent a major milestone in physical AI: systems that can perceive, reason, and act in complex real-world environments with minimal human intervention. While still geographically constrained, these deployments demonstrate how far perception, planning, and real-time decision-making have advanced.

Drones are another area of rapid improvement. Companies such as Amazon have invested heavily in autonomous drone technology, applying AI to navigation, obstacle avoidance, and delivery logistics. These systems highlight the potential of physical AI in last-mile operations, while also underscoring the challenges of safety, regulation, and real-world variability.

For enterprises, the takeaway is that physical AI is advancing but still at an early stage. Unlike software-based AI, physical systems amplify risk, require extensive testing, and demand high-confidence data. Organizations that succeed in physical AI will be those that invest early in data foundations, governance, and operational readiness, long before autonomy becomes widespread.

Strategic Recommendations

AI is no longer optional. The barrier to entry has fallen, and competitive advantage now depends on execution. The path forward is clear:

Focus on high-value opportunities

Prioritize AI use cases tied directly to business outcomes.

Start small, but design for scale

Launch pilots with clear success metrics while building toward shared platforms.

Invest in data readiness

AI outcomes depend on data quality, governance, and accessibility.

Build AI literacy across the organization

Train teams to collaborate with AI, not just use tools.

Treat governance as an enabler

Clear guardrails accelerate adoption by building trust and reuse.

TL;DR

- AI models are now enterprise-ready, not experimental

- AI value will come from ecosystems, not isolated use cases

- 2025 was the year LLM infrastructure matured and standardized

- Data readiness is the biggest limiter to AI adoption at scale

- Domain-specific fine-tuning unlocks accuracy with minimal data investment

- Agentic AI is advancing, but requires strong governance and human oversight

- Organizations that invest in platforms, data, and governance will lead in 2026

Turn Insight Into Impact

AI capabilities are no longer the limiting factor; enterprise readiness is. AI is entering its next phase, defined by scale, governance, and real business impact.

OneSix helps organizations build the data foundations, AI platforms, and governance models required for enterprise adoption. Whether you’re moving beyond pilots or preparing for agentic AI, our experts can help you turn strategy into execution.

Written by Jacob Zweig, Managing Director

Published December 17, 2025